AI in legal practice 2026: ChatGPT and Copilot are the new standard.

In two years, artificial intelligence has become a regular part of Czech legal practice. Adoption has been unprecedentedly fast, but it remains relatively shallow. A survey of 170 lawyers shows which tools they actually use and how their expectations are shifting.

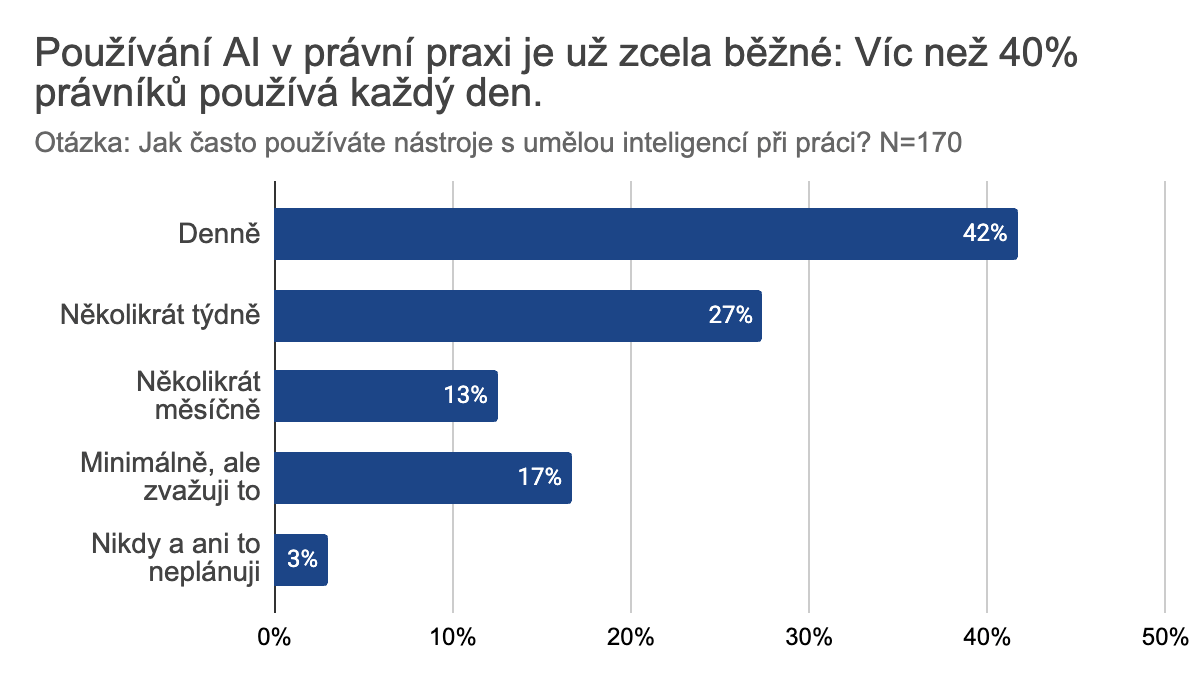

7 out of 10 lawyers use AI several times a week

Most lawyers in Czechia now use AI tools based on language models. Seven out of ten say they work with AI at least several times a week, and one third use it daily.

Going from zero to 70 % in three years is an almost unprecedented adoption pace for the legal profession. The closest comparisons are the switch from printed law collections to electronic databases and the move from mail and fax to e-mail, and even those changes stretched over five to ten years rather than three.

Because change is happening so fast, parts of the legal ecosystem are only now catching up. Difficult questions are emerging: how to bill for work not done by a human, and how to tell genuine quality from output that merely looks formally correct. To some clients, AI assistance can still feel like an unwanted shortcut, even though it makes a far deeper review of the case possible. For others, using AI is becoming part of a lawyer's professional diligence.

What to automate? Lawyers see the greatest potential in research, and accuracy is the main barrier.

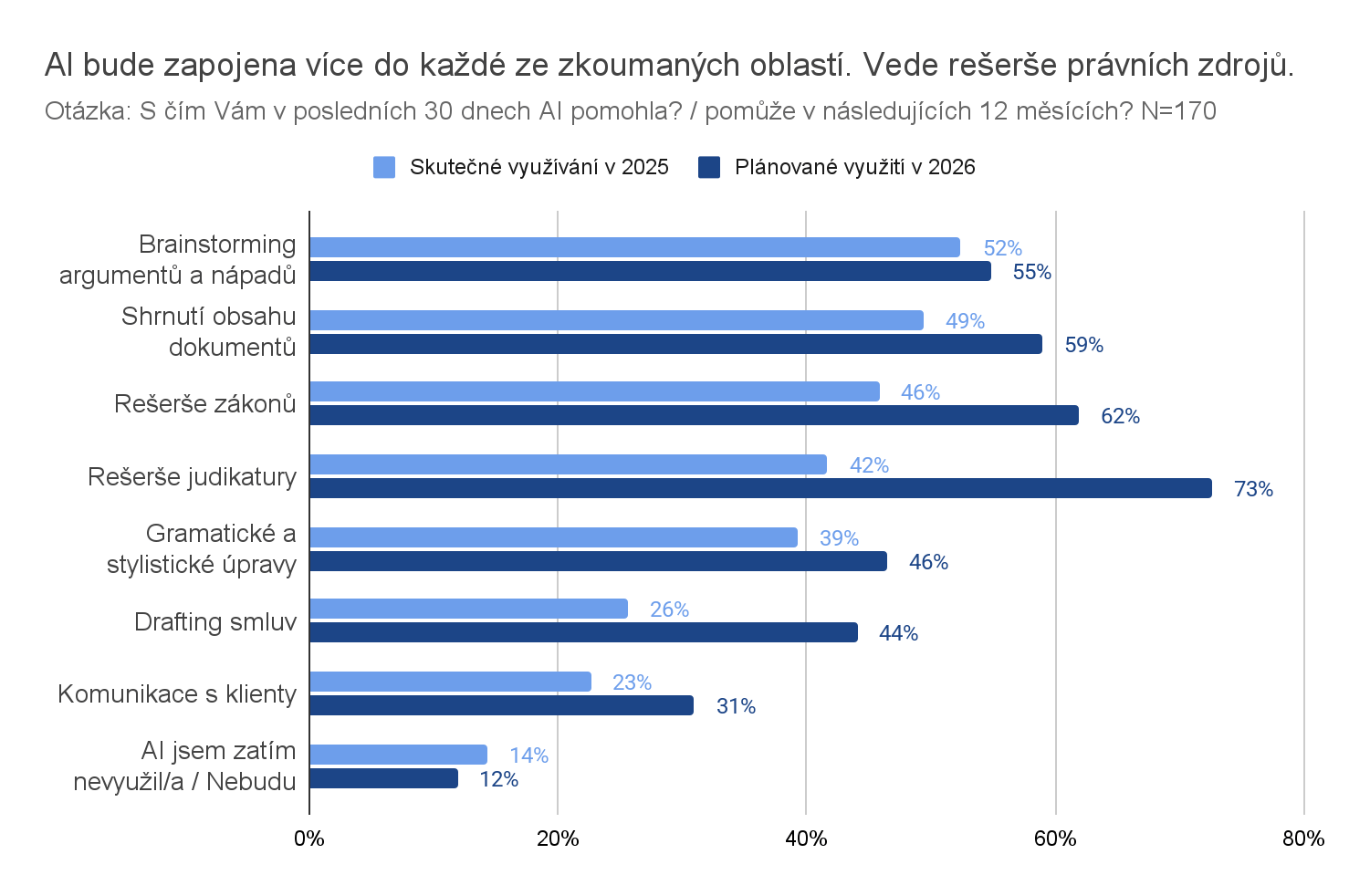

Today, half of respondents use AI for brainstorming and document summarisation. Four in ten use it to research statutes and case law or for grammatical and stylistic editing.

As the profession rapidly gains experience, expectations about where AI will be used next year are shifting markedly. Respondents place the most trust in case-law research, where up to three quarters plan to use AI. Interest in AI-assisted contract drafting has grown sharply, and planned use is rising in every area surveyed.

Only 12 % of respondents said they do not plan to use AI in the next twelve months. But although nearly everyone plans to use it, actual adoption will depend on whether the tools deliver sufficient accuracy, source citations, and legal certainty, so plans and reality may diverge significantly.

What would motivate lawyers to use AI more often? First: the quality of legal outputs (73 %). Second: guarantees of security and confidentiality (54 %). Third: integration into already-used tools and processes (35 %).

Lawyers mostly use legal tools without AI features, or AI tools without legal specialisation

Only 35 % of lawyers fall into the intersection of the two.

We divided the tools into legal-specific systems that work with verified sources and general models that lack full legal context. Most legal systems in use pre-date the breakthrough in generative AI: they rely on searching the source texts by title, docket number, or keyword. Such systems are used by 82 % of lawyers.

Some of these systems are adding AI features, mainly in the form of smarter search, which 28 % of lawyers use. Only 7 % have started using legal-specific systems built on AI language models.

In total, 35 % of all lawyers (28 % + 7 %) use AI in some form within legal-specific systems.

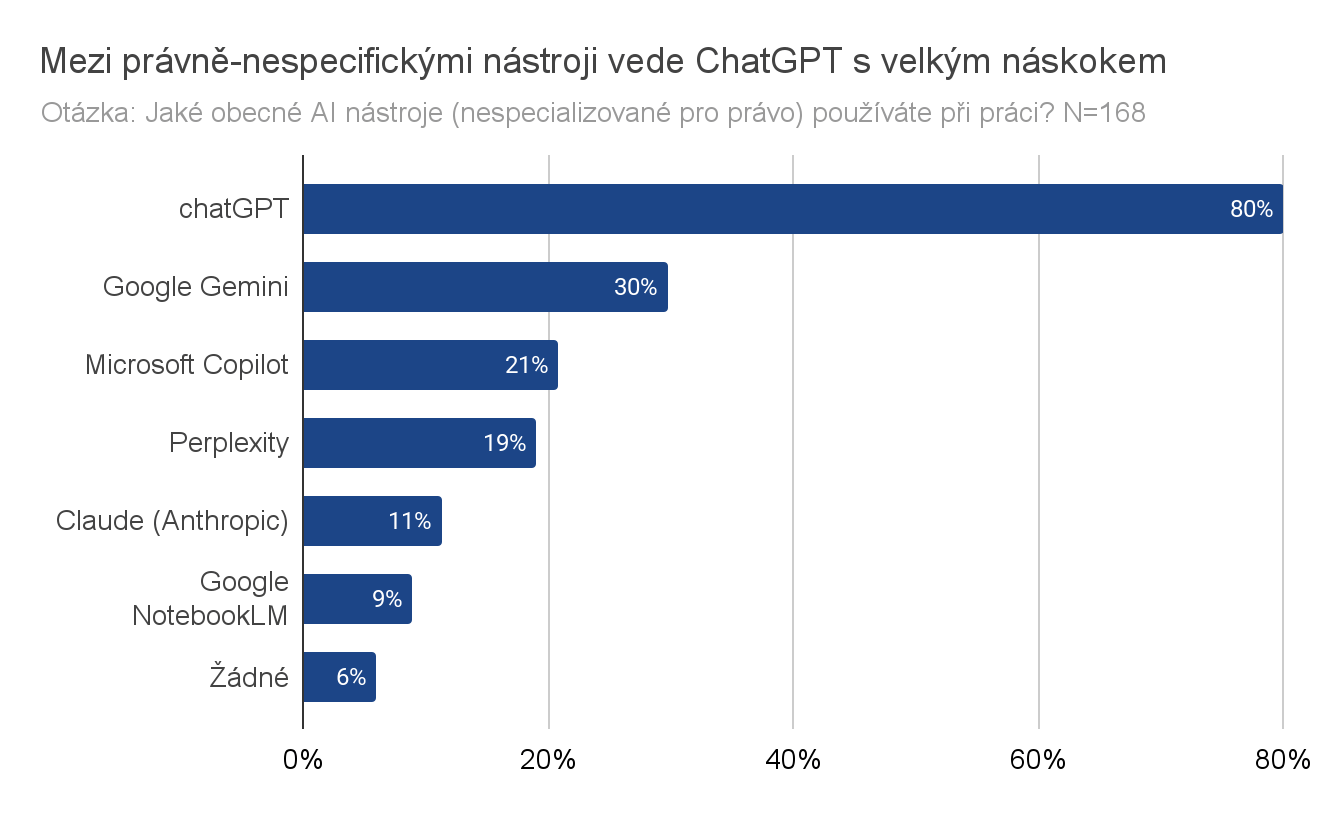

General, non-legal models are far more widespread: only a single-digit percentage of respondents have not used any of them for a work task.

Among general AI models, ChatGPT leads by a clear margin. For ChatGPT we also asked whether respondents use the paid or the free version, and the split is roughly even. The free option evidently contributes to the popularity of general tools.

Rapid change and pressure create risk

The gap between rapid AI adoption for some tasks and the low use of AI specialised for law is one of the survey's most striking findings. Respondents expect to research complex document systems with high accuracy and legal certainty, while keeping sensitive data safe. So far, however, their experience comes mostly from general tools such as ChatGPT or Gemini, which cannot fully meet these demands.

General, non-legal models draw their knowledge from the open internet, where accurate information sits alongside outdated texts and lay opinions, and some important legal sources may be missing entirely. Their conclusions may be wrong or cite laws and judgments that do not exist. Such errors are hard to catch because the output looks formally correct at first glance, which creates serious risk for lawyer and client alike.

The whole market is in the midst of rapid technological change. Products that delivered legal information before the language-model breakthrough have not changed their underlying architecture, while new products have yet to earn the market's trust. Users, meanwhile, are still working out where AI actually helps and how to integrate it into legal work so that it becomes genuine support rather than just another tool on the list.

How to move forward with AI easily and safely?

Try an AI tool built for law, or add legal context to general models.

When selecting a specialised AI tool, consider these five criteria:

- Quality and completeness of sources: the tool should work with current legislation and case law in full, covering not only Supreme Court decisions but judgments at all court levels.

- Accuracy and verifiability of outputs: for legal research, the tool should give exact citations and make it easy to open the primary source.

- Workflow integration: easy everyday use, via a web interface or integration with existing tools.

- Security and data handling: clear rules for handling queries, documents, and sensitive information, including their further use or storage.

- Pricing model and flexibility: AI technology changes fast, so multi-year commitments can become a major burden.

General models (ChatGPT, Gemini, Claude) enriched with legal context

Even general tools can handle more expert parts of legal work, provided you supply them with complete, verified context. You can either upload the specific text of a law or judgment through the interface, or connect the model to a context source. The standard for such connections is MCP (Model Context Protocol), an open protocol introduced by Anthropic and supported in Claude; ChatGPT added the option of connecting to a specific (e.g. legal) context source in mid-2025. Connecting is usually something any user can do, provided there is a service to connect to. DirectCase offers such a service, supplying verified Czech legislation and case law.
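For readers curious what "connecting" means technically: MCP messages are JSON-RPC 2.0, and a client discovers a server's tools with a `tools/list` request and invokes one with `tools/call`. The sketch below only builds those two request messages with the Python standard library. It is illustrative, not a working client: the tool name `search_case_law` and its arguments are hypothetical and do not describe DirectCase's actual interface, and the transport (stdio or HTTP) and the protocol's `initialize` handshake are left out.

```python
import json
from itertools import count
from typing import Optional

# Monotonic request IDs; JSON-RPC 2.0 matches each response to its request by "id".
_ids = count(1)


def jsonrpc_request(method: str, params: Optional[dict] = None) -> str:
    """Serialize a JSON-RPC 2.0 request of the kind an MCP client sends."""
    msg = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return json.dumps(msg, ensure_ascii=False)


# 1) Ask the server which tools it exposes.
list_tools = jsonrpc_request("tools/list")

# 2) Invoke one tool. "search_case_law" and its arguments are HYPOTHETICAL,
#    standing in for whatever a legal-context server actually offers.
call_tool = jsonrpc_request(
    "tools/call",
    {
        "name": "search_case_law",
        # Czech query: "compensation for damage"
        "arguments": {"query": "náhrada škody", "court": "all"},
    },
)

print(list_tools)
print(call_tool)
```

In practice an assistant such as Claude or ChatGPT sends these messages on the user's behalf; the server's reply to `tools/call` carries the tool output in a `content` list, which the model then reads as verified context.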
